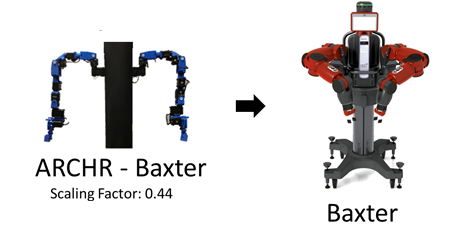

ARCHR Baxter


Overview

The ARCHR controller maintained accurate control of Baxter's end effector within a sphere of 4 cm. Baxter was purchased by George Mason University in Spring 2014 and is a major robot in the ARCHR project. Our project uses ROS and Baxter SDK scripts together with our stereovision system to offer an immersive controller. Its use could be extended in the future by adding realistic barrel vision and vibrational feedback, and by using Baxter's sonar, IR sensors, wrist camera, and built-in front-facing camera. See the Rethink Robotics webpage on Baxter and its various features here.

Tutorials

Code

- Setting up the code

1: Download the code from the ARCHR Baxter branch on the LofaroLabs GitHub (here) by running this command in a terminal:

$ git clone https://github.com/LofaroLabs/Baxter

2: Navigate to the folder where the files were downloaded (the ARCHR folder) and open the startController.sh bash script in a text editor. Change the file paths so that they reflect your current directory.

- One way of doing this is:

$ cd /home/archr/projects/Baxter/ARCHR
$ gedit startController.sh

- The lines to change are lines 9 and 11. For example, if your Ubuntu username is "student" and the files are located under Downloads, then

/home/archr/projects/Baxter/ARCHR/archr_team.jpg

becomes

/home/student/Downloads/Baxter/ARCHR/archr_team.jpg

- On line 16, change only the username and keep ros_ws at the end of the path.

3: Repeat this process for the controllerL, controllerR, gripperR, and serverArms.sh bash files.
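If you have several scripts to edit, the substitutions in steps 2 and 3 can also be scripted. The Python sketch below is a hypothetical helper, not part of the ARCHR repository. It assumes the scripts contain only the two kinds of hard-coded paths described above (the /home/archr/projects/Baxter checkout, and other /home/archr/ paths such as the ros_ws one), so check the edited files afterwards.

```python
from pathlib import Path

# Original paths, taken from the example above.
OLD_ROOT = "/home/archr/projects/Baxter"
OLD_HOME = "/home/archr/"


def rewrite_paths(text, new_root, new_home):
    """Point the hard-coded paths in a script at a new checkout and home directory."""
    # Replace the project root first: once the home prefix has been swapped,
    # OLD_ROOT would no longer match.
    text = text.replace(OLD_ROOT, new_root)
    # Any remaining /home/archr/ paths (such as the ros_ws one on line 16)
    # only need the username changed.
    return text.replace(OLD_HOME, new_home)


def rewrite_scripts(folder, names, new_root, new_home):
    """Rewrite each named script in folder in place; return the names that changed."""
    changed = []
    for name in names:
        path = Path(folder) / name
        original = path.read_text()
        updated = rewrite_paths(original, new_root, new_home)
        if updated != original:
            path.write_text(updated)
            changed.append(name)
    return changed
```

For example, running `rewrite_scripts(".", ["startController.sh", "serverArms.sh"], "/home/student/Downloads/Baxter", "/home/student/")` from the ARCHR folder would apply the "student" example above to those two scripts.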

4: For the stereovision code, set the streaming IP in the left.py and right.py files to your current IP address.

- Also navigate to the Stereovision folder within the base ARCHR folder and change the file paths in the startReceive.sh and startStream.sh files, as in steps 2 and 3 above.
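To find the address to put in left.py and right.py, you can ask the operating system which local address it would use to reach another machine on the same network. This is a minimal sketch, not part of the ARCHR code: `current_ip` is a hypothetical helper, and the peer address you pass (for example, the receiving computer's IP) is your choice.

```python
import socket
import sys


def current_ip(peer):
    """Return the local IPv4 address the OS would use to reach `peer`.

    Connecting a UDP socket sends no packets; it only makes the kernel pick a
    route, and getsockname() then reports that route's source address.
    """
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as s:
        s.connect((peer, 9))  # the port number is arbitrary for a UDP connect
        return s.getsockname()[0]


if __name__ == "__main__":
    # Usage: python current_ip.py <address of another machine on the network>
    target = sys.argv[1] if len(sys.argv) > 1 else "127.0.0.1"
    print(current_ip(target))
```

Passing 127.0.0.1 just returns the loopback address, so give it an address that is reachable over the network the stereovision stream will use.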

- Running the code

1: Connect a LAN cable between Baxter and your computer, and disable networking in Ubuntu as shown in the picture below.

2: Run the startController bash script with the command:

$ bash startController.sh

- You should notice that two new terminals have opened. In these you will check the connection to the physical Dynamixel controller and run the actual control scripts.

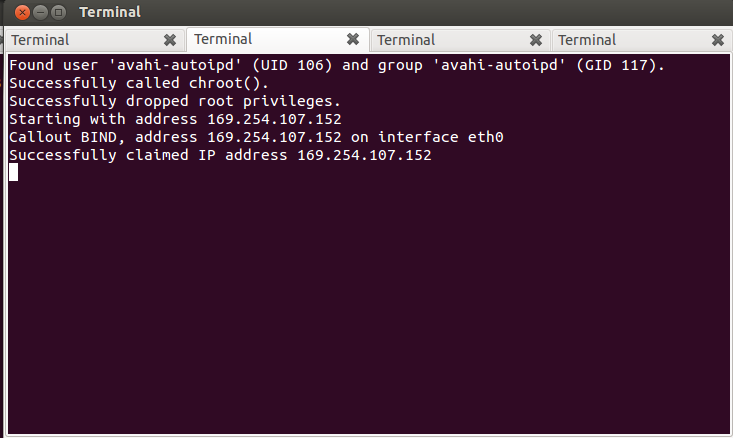

- In the terminal with four tabs (the one without colored text), shown below, the first tab should read "Firewall stopped...", the last line of the second tab should read "Successfully claimed IP...", the last two lines of the third tab should read "Waiting to hear from hubo-daemon... Ready!" and ">> hubo-ach:", and the final tab should confirm the connection with the Dynamixels.

- To communicate successfully with Baxter, the first tab should report that the firewall has been disabled, and the second that an IP address able to communicate with Baxter over the LAN has been assigned (this may differ for you if you are communicating over wireless). The third tab should indicate that the joint-streaming interface (hubo-ach) has started, and the last tab that the Dynamixel servos forming the physical arm that controls Baxter have been detected. If any of these tabs does not show this, close all terminals and restart the script (or rerun only the step that failed).

- For more help, read the code comments and documentation, check Rethink's website for help with the Baxter code, or email Mannan (mannanjavid@gmail.com).

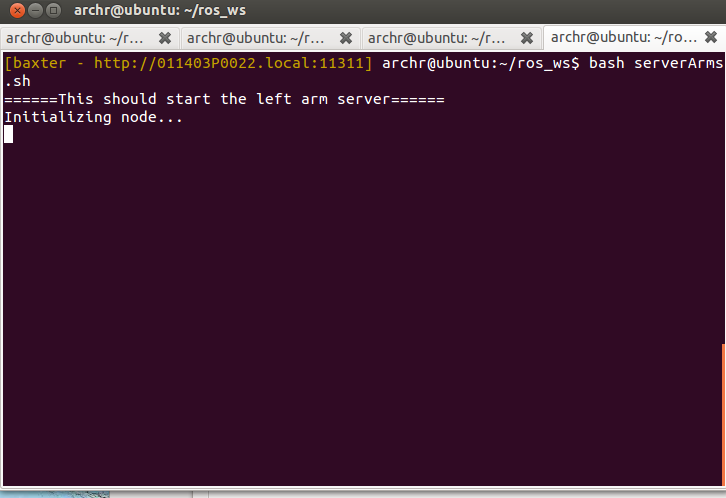
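The checks for the first three tabs can be summarized as a small checklist. The sketch below is illustrative only: the ARCHR scripts do not provide it, it assumes you have copied each tab's text (or redirected it to a file), and the tab names are hypothetical labels. The Dynamixel tab is left out because its exact confirmation message is not given here.

```python
# Text each tab should contain, taken from the description above.
# The Dynamixel tab is not checked because its exact message is unknown.
EXPECTED = {
    "firewall": ["Firewall stopped"],
    "ip": ["Successfully claimed IP"],
    "hubo-ach": ["Waiting to hear from hubo-daemon", "Ready!", ">> hubo-ach:"],
}


def failed_tabs(outputs):
    """Return the tabs whose captured text lacks one of the expected markers.

    outputs maps a tab label to the text copied from that tab. A tab missing
    from outputs counts as failed, so it gets rerun.
    """
    failed = []
    for tab, markers in EXPECTED.items():
        text = outputs.get(tab, "")
        if not all(marker in text for marker in markers):
            failed.append(tab)
    return failed
```

Any tab returned by `failed_tabs` corresponds to a step to rerun, as described above.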

3: Finally, you are ready to control Baxter with the controller! In the second terminal that opened, shown below, run the server, arm, and gripper bash scripts (run the server before the arms).

- Note 1: The ros_ws workspace must be loaded and shown in orange, which indicates that the ROS environment for Baxter has been loaded.

- Note 2: The server must be run first. The controllers for the two arms are separate and must each be run manually, in any order. Running the gripper last is recommended.

- Note 3: The gripper must be calibrated manually every time Baxter boots, before it is used. Run the Baxter gripper_keyboard example located in the ros_ws/src/baxter_examples/scripts directory and press the key that calibrates the appropriate gripper.
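The ordering rules in these notes can be written down as a quick check before starting a session. This is a hypothetical helper, not part of the repository. It uses the script base names from the setup section (serverArms, controllerL, controllerR, gripperR) as labels and only reports problems; it does not launch anything.

```python
SERVER = "serverArms"
ARMS = {"controllerL", "controllerR"}  # may be started in either order
GRIPPER = "gripperR"


def launch_problems(order, gripper_calibrated):
    """List the problems with a planned launch order of the ARCHR scripts.

    order: script labels in the order you plan to run them.
    gripper_calibrated: True once the gripper_keyboard calibration has been
    done since Baxter last booted.
    """
    problems = []
    if not order or order[0] != SERVER:
        problems.append("run the server first")
    unknown = [name for name in order if name not in ARMS | {SERVER, GRIPPER}]
    if unknown:
        problems.append("unknown scripts: " + ", ".join(unknown))
    if GRIPPER in order:
        if not gripper_calibrated:
            problems.append("calibrate the gripper with gripper_keyboard first")
        if order[-1] != GRIPPER:
            problems.append("running the gripper last is recommended")
    return problems
```

An empty list means the plan follows Notes 2 and 3; the arm controllers are deliberately not ordered, since either may go first.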

4: Congratulations! By now Baxter has started moving and has possibly done something he shouldn't have. The next step is to modify the sendACHData.py script (in the ARCHR/scripts folder) to account for the angle offsets in your servos and to perform further debugging.
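One common way to account for the offsets is to record, for each joint, the servo reading when the corresponding Baxter joint should be at zero, and subtract it from every later reading. The sketch below shows that idea; it is not the logic in sendACHData.py. It assumes AX-12-style servos that report positions 0 to 1023 across 300 degrees with 512 at the center, and the function names and the `sign` parameter are hypothetical.

```python
import math

# Assumption: AX-12-style resolution (0..1023 over 300 degrees, 512 = center).
# Change these constants for other Dynamixel models.
CENTER = 512
RAD_PER_TICK = math.radians(300) / 1023


def dyn_to_rad(ticks):
    """Convert a raw Dynamixel position to radians relative to the center."""
    return (ticks - CENTER) * RAD_PER_TICK


def joint_angle(ticks, zero_ticks=CENTER, sign=1):
    """Map a servo reading to a Baxter joint angle in radians.

    zero_ticks: the servo reading recorded while the Baxter joint is at zero.
    sign: -1 if the servo turns the opposite way to the Baxter joint.
    """
    return sign * (dyn_to_rad(ticks) - dyn_to_rad(zero_ticks))
```

If you adopt this approach, the recorded zero readings and signs depend on how each servo is mounted in your physical arm, so measure them per joint.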

- Stereovision System

1: By default, the stereovision system is disabled. It can be enabled by uncommenting the lines below in the sendACHData.py file and rerunning the sendACHData.py (Dynamixel) portion of the code.

'''Oculus Rift stuff
ovr_Initialize()
hmd = ovrHmd_Create(0)
hmdDesc = ovrHmdDesc()
ovrHmd_GetDesc(hmd, byref(hmdDesc))
print hmdDesc.ProductName
ovrHmd_StartSensor( \
    hmd, ovrSensorCap_Orientation | ovrSensorCap_YawCorrection, 0
    )'''

'''ss = ovrHmd_GetSensorState(hmd, ovr_GetTimeInSeconds())
pose = ss.Predicted.Pose
tiltlist.append(rad2dyn(pose.Orientation.x*np.pi));
panlist.append(rad2dyn(pose.Orientation.y*np.pi));
tilt = np.mean(tiltlist);
pan = np.mean(panlist);
'''

'''ss = ovrHmd_GetSensorState(hmd, ovr_GetTimeInSeconds())
pose = ss.Predicted.Pose
tilt = rad2dyn(pose.Orientation.x*np.pi);
myActuators[8]._set_goal_position(tilt);
pan = rad2dyn(pose.Orientation.y*np.pi);
myActuators[9]._set_goal_position(pan);
tiltlist.append(rad2dyn(pose.Orientation.x*np.pi));
panlist.append(rad2dyn(pose.Orientation.y*np.pi));
panlist.pop(0);
tiltlist.pop(0);
tilt = np.mean(tiltlist);
pan = np.mean(panlist);
#myActuators[1]._set_goal_position(tilt);
myActuators[2]._set_goal_position(pan);
print "tilt %d" %tilt
print "pan %d" %pan '''

- Note 1: Make sure the two webcams are plugged in and being picked up by the left.py and right.py streaming scripts. Test this by running left.py and right.py locally and opening the webcam stream in a browser.

- Note 2: After the IPs have been set in the code, navigate to the Stereovision folder and run startStream.sh on the streaming computer and startReceive.sh on the receiving computer.

- Note 3: If the sendACHData.py script, while scanning for Dynamixels, detects that the Oculus SDK was opened and then suddenly closed, run the Oculus test script located in the Baxter/ARCHR/scripts folder. Confirm that it streams pitch/yaw/roll data by moving the headset and checking that the values change. If they do not, see the guide on setting up the Oculus dependencies for further help.
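The tracking code uncommented in step 1 smooths the headset's pan and tilt with a sliding-window moving average: each new reading is appended to tiltlist or panlist, the oldest reading is popped, and the list mean is used as the goal position. The sketch below shows the same filter with collections.deque. It is illustrative, not the ARCHR code: `rad_to_ticks` is a hypothetical stand-in for the script's rad2dyn (whose definition is not shown here) and assumes AX-12-style servos with 512 at the center and 1023 ticks across 300 degrees.

```python
import math
from collections import deque

# Assumption: AX-12-style servo (512 = center, 1023 ticks over 300 degrees).
CENTER = 512
TICKS_PER_RAD = 1023 / math.radians(300)


def rad_to_ticks(angle_rad):
    """Hypothetical stand-in for rad2dyn: radians to a 0..1023 goal position."""
    ticks = round(CENTER + angle_rad * TICKS_PER_RAD)
    return max(0, min(1023, ticks))  # clamp to the servo's valid range


class MovingAverage:
    """Mean of the last `size` samples, like the append/pop(0)/mean lists."""

    def __init__(self, size):
        self.samples = deque(maxlen=size)

    def update(self, value):
        self.samples.append(value)  # a full deque drops its oldest sample
        return sum(self.samples) / len(self.samples)
```

For example, with `pan_filter = MovingAverage(5)`, the call `pan = pan_filter.update(rad_to_ticks(pose.Orientation.y * math.pi))` mirrors the pan lines above; the window size of 5 is an arbitrary choice. Because the original lists are popped after every append, they must start pre-filled; the deque instead averages over however many samples it has until the window is full.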

Enjoy using this controller!